Autonomous Industrial Patrol Ground Robotics System

Description

The system is a cyber-physical stack for autonomous patrolling of industrial sites using ground robots, combining global and local perception to enable reliable operation in dynamic environments. The main computing unit is a compact industrial PC with multiple Ethernet interfaces, enabling separation of sensing subsystems into independent networks and supporting high-bandwidth data streaming. Navigation and obstacle perception are based on multiple 3D ranging sensors, complemented by a multi-constellation satellite-based positioning system with redundant antennas for robust and accurate localization. Vision sensors provide high-resolution visual data for object detection and classification, while a cellular communication module ensures low-latency, high-throughput connectivity with the control center for real-time data and video transmission.

Autonomous Ground Robotics System for Industrial Inspection

The system is a cyber-physical stack for autonomous patrolling of industrial sites using ground robots. It combines global awareness with real-time local perception to ensure reliable operation in dynamic, real-world conditions, including areas where GNSS is unreliable or unavailable.

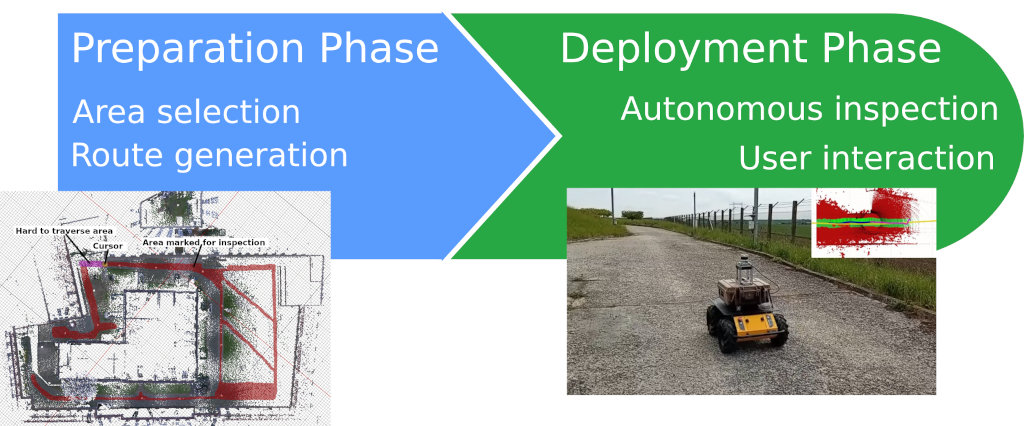

The system is designed as a two-phase pipeline: an offline preparation phase and an autonomous operational phase. Together, they allow robots to repeatedly inspect large areas while adapting to changes in their surroundings.

Preparatory Phase: Environment Setup

Mapping and Annotation

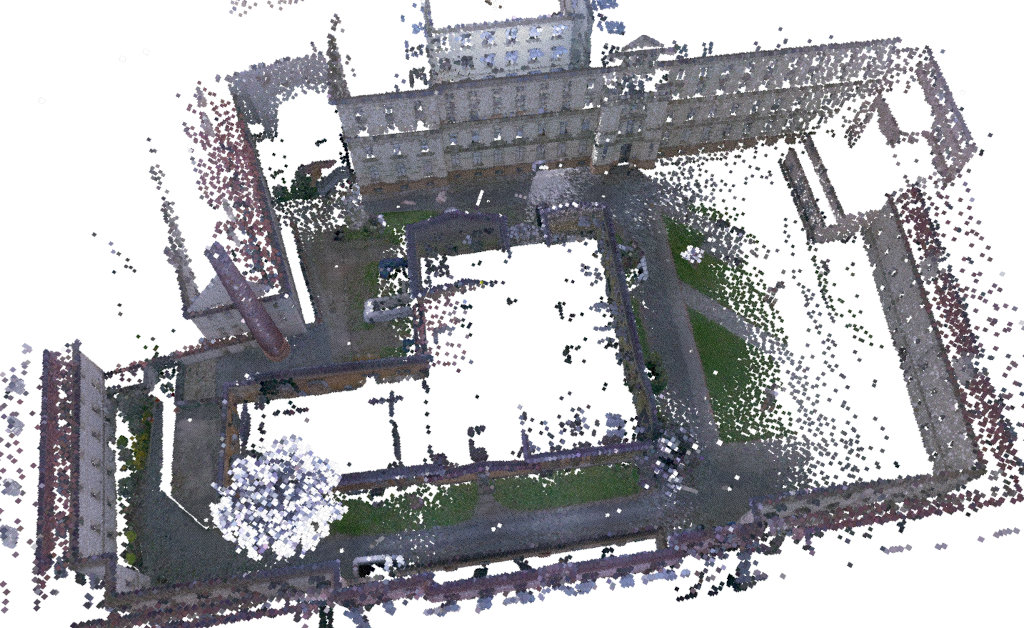

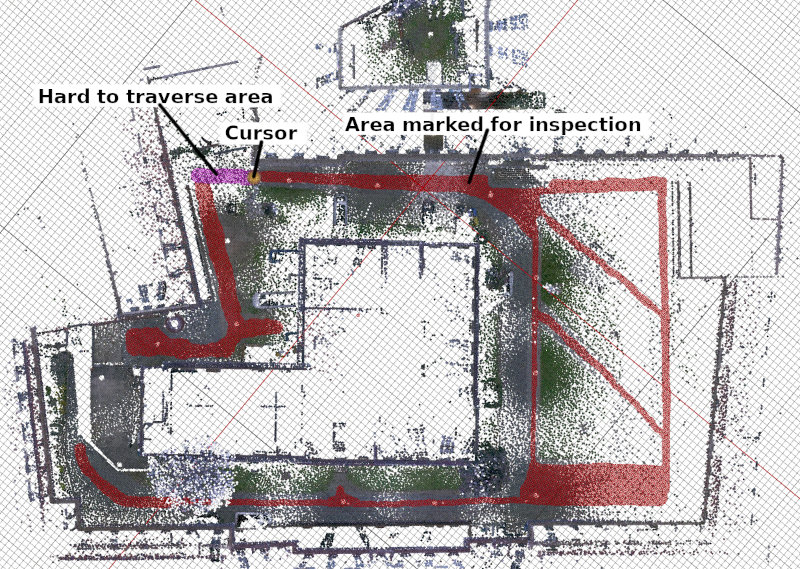

The inspection area is first captured as a 3D point cloud, for example using a LiDAR-equipped robot or surveying equipment. The data is projected into a floor plan representation, where an operator defines accessible regions and areas of interest for inspection.
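
As a rough illustration of the projection step, the sketch below collapses a 3D point cloud into a 2D grid by counting the points that fall into each cell within a height band. The function name `project_to_floor_plan`, the cell size, and the height limits are assumptions made for this example and are not taken from the system itself.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres
Cell = Tuple[int, int]              # integer grid index (column, row)


def project_to_floor_plan(
    points: Iterable[Point],
    cell_size: float = 0.5,  # assumed grid resolution in metres
    min_z: float = 0.2,      # assumed lower bound, skips ground returns
    max_z: float = 2.0,      # assumed upper bound, skips ceilings and overhangs
) -> Dict[Cell, int]:
    """Count the points per 2D cell, keeping only points with min_z <= z <= max_z."""
    counts: Dict[Cell, int] = defaultdict(int)
    for x, y, z in points:
        if min_z <= z <= max_z:
            cell = (math.floor(x / cell_size), math.floor(y / cell_size))
            counts[cell] += 1
    return dict(counts)


cloud = [(0.1, 0.1, 1.0), (0.2, 0.3, 1.5), (1.2, 0.1, 0.05), (-0.1, 0.0, 1.0)]
floor_plan = project_to_floor_plan(cloud)
```

Cells with many points in the height band typically correspond to walls and equipment, which gives the operator a 2D outline to annotate.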

Inspection Route Generation

Based on the annotated map, the system automatically generates a set of patrol routes:

- Inspection routes ensure coverage of all relevant areas

- Return routes enable safe navigation back to the base station

The system produces multiple route variants so that each mission can follow a different trajectory. Avoiding predictable patrol patterns improves robustness and security.

Outputs of the Preparatory Phase

- 3D map of the inspection environment

- Annotated floor plan

- Set of inspection routes

- Set of return routes

Operational Phase: Autonomous Patrol

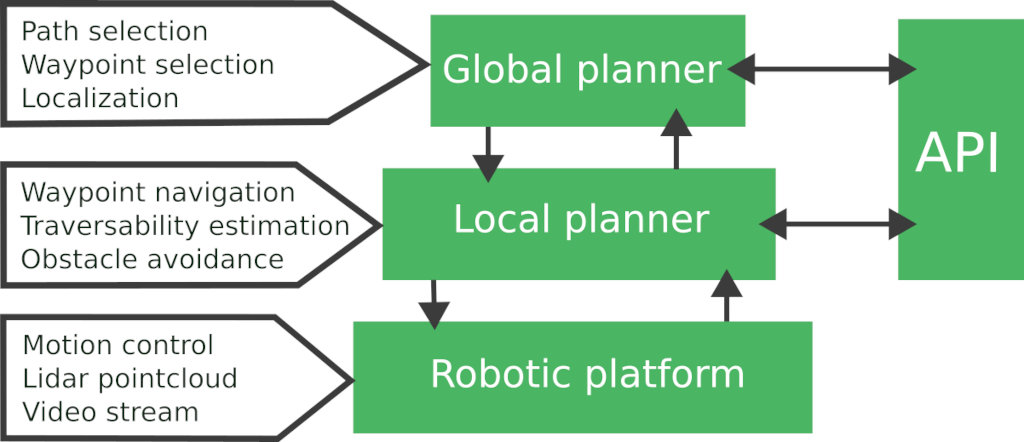

During operation, the robot executes inspection missions autonomously. The system architecture is organized into three cooperating layers:

- Global Planning — mission management and route selection

- Local Planning — real-time navigation and obstacle handling

- Motion Control — execution of movement commands

All layers are accessible through an API for monitoring and operator interaction.

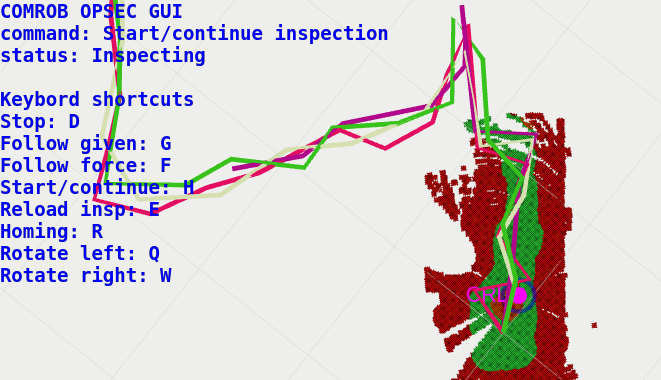

Global Planning

The global planner manages the mission lifecycle:

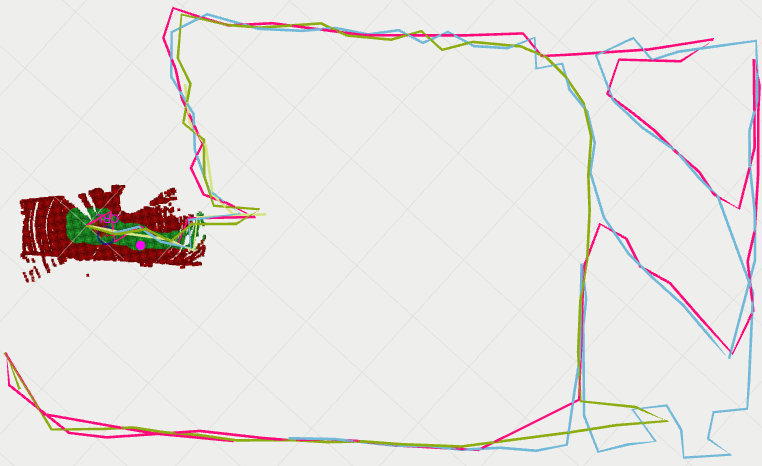

- Selects inspection routes based on the robot’s position.

- Alternates routes between missions to ensure variability.

- Switches to a return route when required, for example when the battery is low.

Each route is defined as a sequence of waypoints that the robot must visit.
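
The selection rules above can be sketched in a few lines. This is a minimal illustration and not the planner's actual implementation: the `Route` and `GlobalPlanner` classes, the 25% battery threshold, and the choice of "route whose first waypoint is closest" as the position-based criterion are all assumptions.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Waypoint = Tuple[float, float]  # (x, y) in map coordinates


@dataclass
class Route:
    name: str
    waypoints: List[Waypoint]  # ordered sequence the robot must visit


@dataclass
class GlobalPlanner:
    inspection_routes: List[Route]
    return_routes: List[Route]
    low_battery: float = 0.25         # assumed threshold, fraction of full charge
    last_route: Optional[str] = None  # name of the previous inspection route

    @staticmethod
    def _start_distance(route: Route, position: Waypoint) -> float:
        return math.dist(route.waypoints[0], position)

    def next_route(self, position: Waypoint, battery: float) -> Route:
        # Low battery: switch to the return route that starts closest to the robot.
        if battery <= self.low_battery:
            return min(self.return_routes,
                       key=lambda r: self._start_distance(r, position))
        # Otherwise skip the route used last time so consecutive missions differ.
        candidates = [r for r in self.inspection_routes
                      if r.name != self.last_route] or self.inspection_routes
        route = min(candidates, key=lambda r: self._start_distance(r, position))
        self.last_route = route.name
        return route


planner = GlobalPlanner(
    inspection_routes=[Route("a", [(0, 0), (5, 0)]), Route("b", [(1, 0), (5, 5)])],
    return_routes=[Route("home", [(5, 5), (0, 0)])],
)
first = planner.next_route((0, 0), battery=0.9)
second = planner.next_route((0, 0), battery=0.9)
third = planner.next_route((0, 0), battery=0.1)
```

With these inputs, the first two calls return different inspection routes and the third returns the return route, because the battery level is below the assumed threshold.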

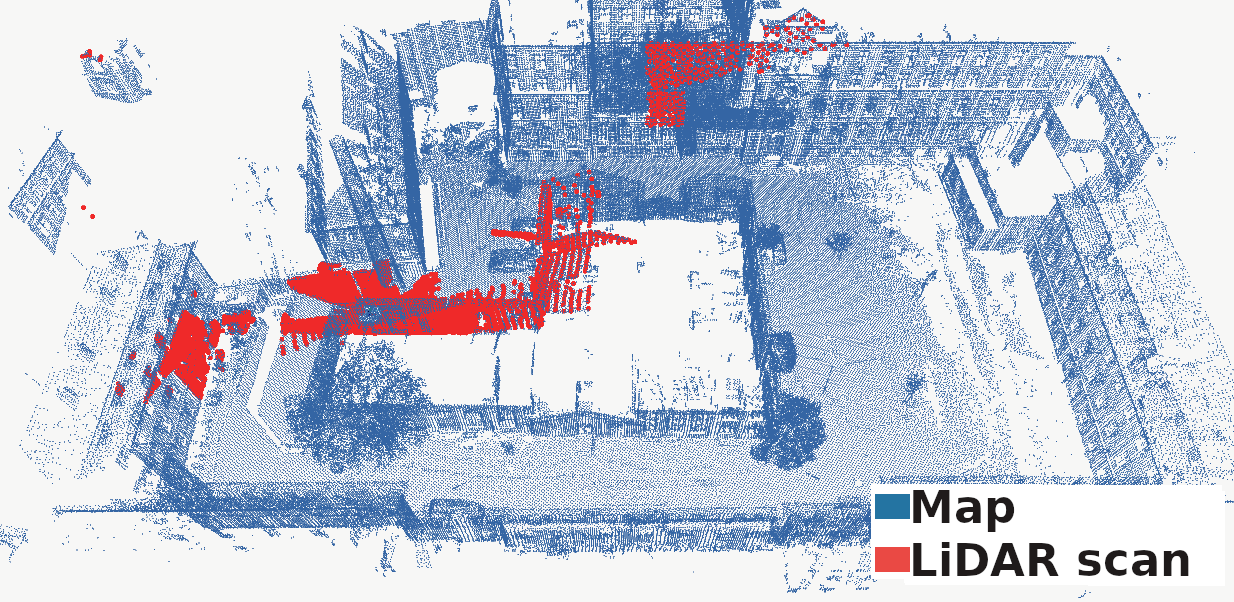

Robust Localization

Accurate localization is essential for reliable navigation. While GNSS can be used in open environments, it is often unreliable near buildings or indoors.

The Patrol Stack addresses this by combining:

- LiDAR-based environment perception

- Inertial sensing

- Pre-built 3D maps of the environment

This combination enables precise, real-time localization even where GNSS is degraded or unavailable.

Local Planning

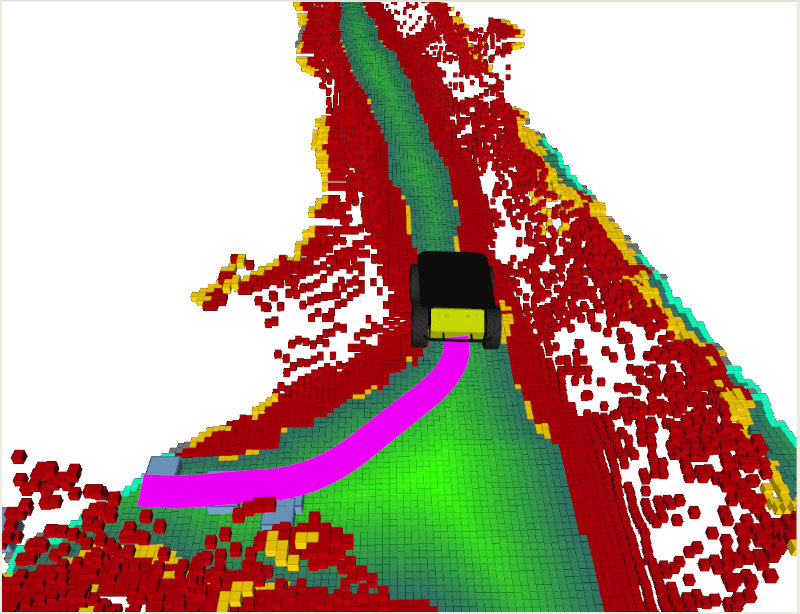

The local planner guides the robot toward each waypoint while adapting to current conditions in the environment.

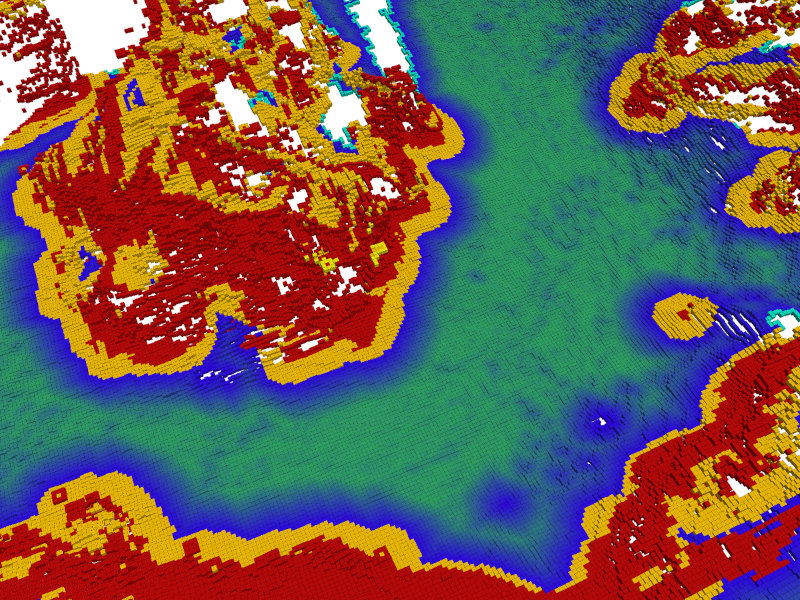

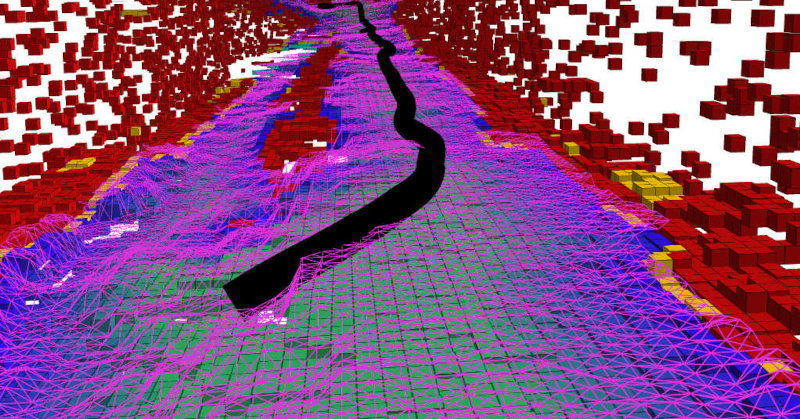

Environment Awareness

As the robot moves, it continuously builds a local model of its surroundings using onboard sensors. This model is used to distinguish between:

- Traversable terrain

- Obstacles and restricted areas

This allows the robot to react to changes such as newly placed obstacles or terrain variations.
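
A simple way to picture this distinction is a grid in which each cell is labelled by the height spread of the sensor points that fall into it. The sketch below is one such heuristic under stated assumptions, not the system's actual terrain model: `classify_cells`, the cell size, and the `max_step` threshold are illustrative.

```python
import math
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres, robot-local frame
Cell = Tuple[int, int]


def classify_cells(
    points: Iterable[Point],
    cell_size: float = 0.25,  # assumed grid resolution in metres
    max_step: float = 0.15,   # assumed largest height spread the robot can drive over
) -> Dict[Cell, str]:
    """Label each observed cell as 'traversable' or 'obstacle'."""
    z_range: Dict[Cell, Tuple[float, float]] = {}
    for x, y, z in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        lo, hi = z_range.get(cell, (z, z))
        z_range[cell] = (min(lo, z), max(hi, z))
    # A large spread between the lowest and highest point means something
    # is standing in the cell; a small spread means roughly flat ground.
    return {
        cell: "obstacle" if hi - lo > max_step else "traversable"
        for cell, (lo, hi) in z_range.items()
    }


scan = [(0.10, 0.10, 0.00), (0.12, 0.10, 0.02), (1.00, 1.00, 0.00), (1.05, 1.00, 0.80)]
labels = classify_cells(scan)
```

Because the grid is rebuilt from current sensor data, an object placed on the route after mapping appears as an obstacle cell even though the pre-built map does not contain it.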

Path Planning and Execution

Using the local environment model, the system computes a safe path toward the next goal. A dedicated control module then translates this path into motion commands for the robot platform.
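
The two steps can be illustrated with a small grid example. The planner here is a plain breadth-first search and the controller is a simple heading-proportional rule. Both are stand-ins chosen for brevity, since the text does not name the algorithms actually used. The function names, the speed limit, and the gain are assumptions.

```python
import math
from collections import deque
from typing import List, Optional, Set, Tuple

Cell = Tuple[int, int]


def plan_path(blocked: Set[Cell], start: Cell, goal: Cell,
              width: int, height: int) -> Optional[List[Cell]]:
    """Breadth-first search on a 4-connected grid; returns the cells from start to goal."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back through the parent links
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        cx, cy = cell
        for nxt in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
            nx, ny = nxt
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and nxt not in blocked and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None  # goal is unreachable


def velocity_command(pose: Tuple[float, float, float], target: Tuple[float, float],
                     max_speed: float = 0.8, turn_gain: float = 1.5) -> Tuple[float, float]:
    """Turn toward the target and slow down while the heading error is large."""
    x, y, yaw = pose
    error = math.atan2(target[1] - y, target[0] - x) - yaw
    error = math.atan2(math.sin(error), math.cos(error))  # wrap to [-pi, pi]
    linear = max_speed * max(0.0, math.cos(error))        # m/s, never negative
    angular = turn_gain * error                           # rad/s
    return linear, angular


path = plan_path({(1, 0), (1, 1)}, start=(0, 0), goal=(2, 0), width=3, height=3)
command = velocity_command((0.0, 0.0, 0.0), target=(1.0, 0.0))
```

In this example the direct route is blocked, so the returned path detours around the two obstacle cells. The velocity command for a target straight ahead is full forward speed with no rotation.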

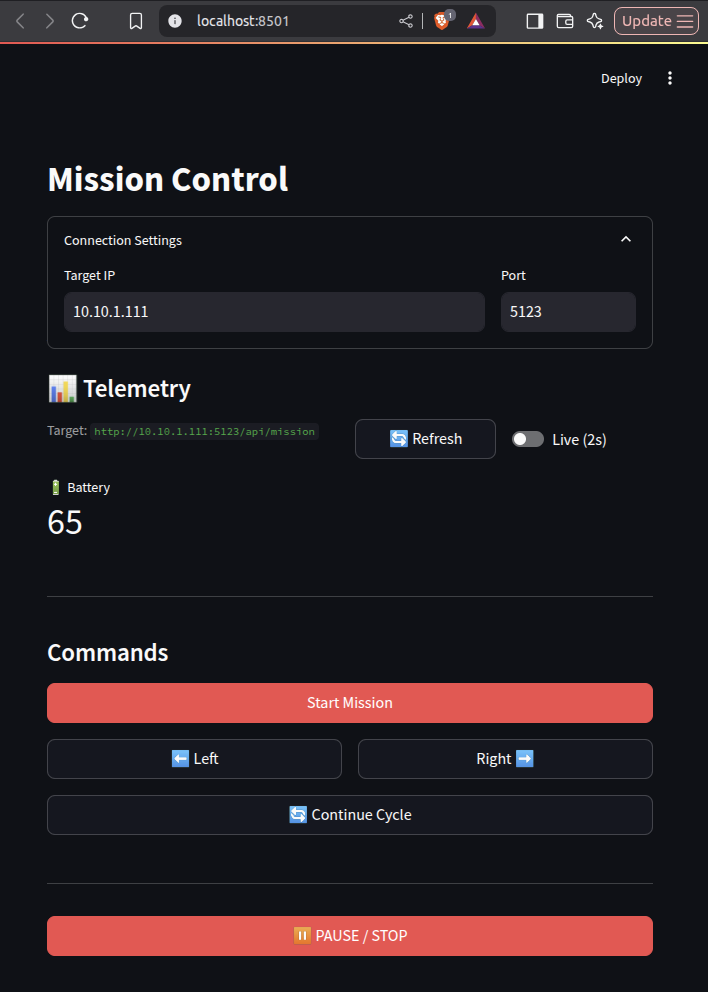

API and Operator Interaction

The system provides an API for integration and supervision, offering:

- Access to robot telemetry (position, battery, sensor status)

- Remote mission control (start, stop, route selection)

- Integration with custom applications

Client libraries are available in Python and C++, enabling development of graphical interfaces and monitoring tools.
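
The interface of the client libraries is not described here, so the following sketch does not call them. It only shows how a monitoring tool built on top of such an API might represent the telemetry listed above and derive operator warnings from it. The `Telemetry` class, its field names, and the `operator_warnings` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Telemetry:
    position: Tuple[float, float]   # (x, y) in map coordinates
    battery: float                  # state of charge, 0.0 to 1.0
    sensor_status: Dict[str, bool]  # sensor name -> True if healthy


def operator_warnings(t: Telemetry, low_battery: float = 0.25) -> List[str]:
    """Turn one telemetry sample into human-readable warnings."""
    warnings = []
    if t.battery <= low_battery:  # assumed threshold
        warnings.append(f"battery low: {t.battery:.0%}")
    for name, healthy in sorted(t.sensor_status.items()):
        if not healthy:
            warnings.append(f"sensor fault: {name}")
    return warnings


sample = Telemetry(position=(12.5, 3.0), battery=0.2,
                   sensor_status={"lidar_front": True, "camera": False})
messages = operator_warnings(sample)
```

A graphical interface could run a check of this kind on each telemetry update and display the resulting messages next to the robot's position.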

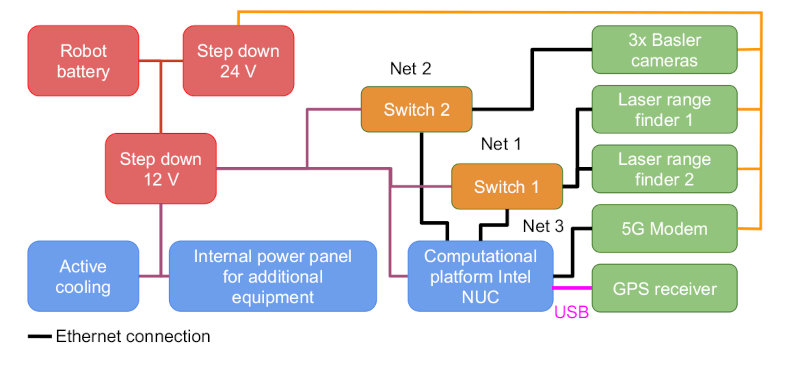

Navigation Box

The Navigation Box is a self-contained sensing and computing unit designed for autonomous operation in industrial environments. It integrates perception, localization, and communication into a single deployable system.

Perception and Sensing

The system combines multiple sensing modalities to achieve robust environment understanding:

- 3D LiDAR sensors for full-surround perception and obstacle detection

- GNSS receiver for global positioning and initialization

- RGB cameras for visual awareness and inspection tasks

Onboard Computing

All data processing is performed onboard using an industrial computing unit, enabling:

- Real-time perception and decision-making

- High-throughput sensor data handling

- Reliable operation without external infrastructure

Connectivity

A high-speed communication module provides:

- Remote monitoring and control

- Real-time data streaming

- Integration with external systems

Hardware Overview

The Navigation Box integrates:

- Multi-constellation GNSS receiver

- Dual LiDAR setup for long- and short-range perception

- Industrial cameras for visual sensing

- Onboard computing unit with high memory and storage capacity

- High-speed networking and communication modules